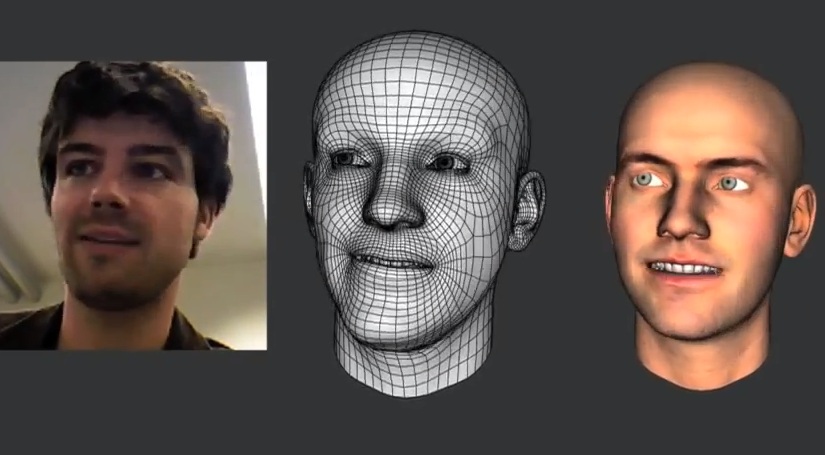

Embodiments relate to a method for real-time facial animation, and a processing device for real-time facial animation. The method includes providing a dynamic expression model, receiving tracking data corresponding to a facial expression of a user, estimating tracking parameters based on the dynamic expression model and the tracking data, and refining the dynamic expression model based on the tracking data and estimated tracking parameters. The method may further include generating a graphical representation corresponding to the facial expression of the user based on the tracking parameters. Embodiments pertain to a real-time facial animation system.

1. A method for real-time facial animation, comprising: providing a dynamic expression model that includes a plurality of blendshapes; receiving tracking data from a plurality of frames in a temporal sequence, the tracking data corresponding to facial expressions of a user; estimating tracking parameters based on the tracking data from each of the plurality of frames, the tracking parameters corresponding to one or more weight values of the blendshapes; and refining the dynamic expression model based on the tracking parameters, wherein refining the dynamic ...
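The per-frame loop described above (provide a model, estimate blendshape weights from tracking data, then refine the model) can be illustrated with a toy sketch. This is not the patented implementation: the class `DynamicExpressionModel`, the functions `estimate_weights` and `refine_model`, the linear "neutral plus weighted displacements" blendshape form, the projected gradient descent solver, and the step sizes are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List

Vector = List[float]


@dataclass
class DynamicExpressionModel:
    """Hypothetical linear blendshape model: neutral + sum_k w_k * delta_k."""
    neutral: Vector
    blendshapes: List[Vector]  # one displacement vector per blendshape

    def evaluate(self, weights: Vector) -> Vector:
        out = list(self.neutral)
        for w, delta in zip(weights, self.blendshapes):
            for i, d in enumerate(delta):
                out[i] += w * d
        return out


def squared_error(model: DynamicExpressionModel, weights: Vector, observed: Vector) -> float:
    return sum((p - o) ** 2 for p, o in zip(model.evaluate(weights), observed))


def estimate_weights(model: DynamicExpressionModel, observed: Vector,
                     iterations: int = 200, step: float = 0.1) -> Vector:
    """Estimate tracking parameters (blendshape weights) for one frame.

    Assumption: projected gradient descent on the squared error, with
    weights clamped to [0, 1]. The step must be small relative to the
    blendshape magnitudes for this to converge.
    """
    weights = [0.0] * len(model.blendshapes)
    for _ in range(iterations):
        residual = [p - o for p, o in zip(model.evaluate(weights), observed)]
        for k, delta in enumerate(model.blendshapes):
            grad = sum(r * d for r, d in zip(residual, delta))
            weights[k] = min(1.0, max(0.0, weights[k] - step * grad))
    return weights


def refine_model(model: DynamicExpressionModel, observed: Vector,
                 weights: Vector, rate: float = 0.5) -> None:
    """Refine the blendshapes in place using the frame's data and weights.

    Assumption: a single gradient step that moves each blendshape toward
    explaining the remaining residual, scaled by its weight. The rate
    should be kept small when many blendshapes are active.
    """
    residual = [o - p for o, p in zip(observed, model.evaluate(weights))]
    for w, delta in zip(weights, model.blendshapes):
        for i, r in enumerate(residual):
            delta[i] += rate * w * r


def process_frame(model: DynamicExpressionModel, observed: Vector) -> Vector:
    """One tracking step: estimate weights, then refine the model."""
    weights = estimate_weights(model, observed)
    refine_model(model, observed, weights)
    return weights
```

In this sketch, the returned weights would drive the graphical representation (for example, an avatar using the same blendshape set), while the refined model is carried forward to the next frame in the sequence.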

